Core Workflow

Devplan moves a feature from idea to implementation-ready code in nine steps. The goal throughout is to preserve context, so that decisions made at the planning stage are still available when an engineer opens their AI coding assistant.

| Step | What happens | Your involvement |
|---|---|---|
| 1. Input | Feature idea submitted in any format | Submit text, file, mockup, or issue link |
| 2. Discovery | Planning Agent asks targeted questions | Answer questions in plain language |
| 3. PRD Generation | Devplan writes and self-reviews a PRD | Review and approve |
| 4. User Stories | Feature broken into sized, scored stories | Review stories and Agentic Scores |
| 5. Technical Design | Implementation guidance generated per story | Review technical tasks |
| 6. PM Tool Sync | Push structure to Linear or Jira | Trigger sync from the Build Plan |
| 7. Coding Prompts | Context-rich prompts generated per story | Copy prompt to your AI IDE |
| 8. CLI Integration | Pull context into your local IDE | Run `devplan specs start` in your terminal |
| 9. Monitoring | Track progress in the Runs view | Review status and blockers |

Step 1: Input and context processing

Devplan accepts a wide range of input formats.

| Input type | Examples |
|---|---|
| Text | Feature ideas, customer feedback, bug reports, existing specs |
| Visual | UX mockups, design files, user flow diagrams |
| Data | Analytics insights, interview notes, market research |
| Issue links | Jira or Linear issues pasted into the feature creation form |

Before any questions are asked, Devplan's Context Engine analyzes your input against your connected repository — examining codebase architecture, existing patterns, current product features, team conventions, and historical project decisions.

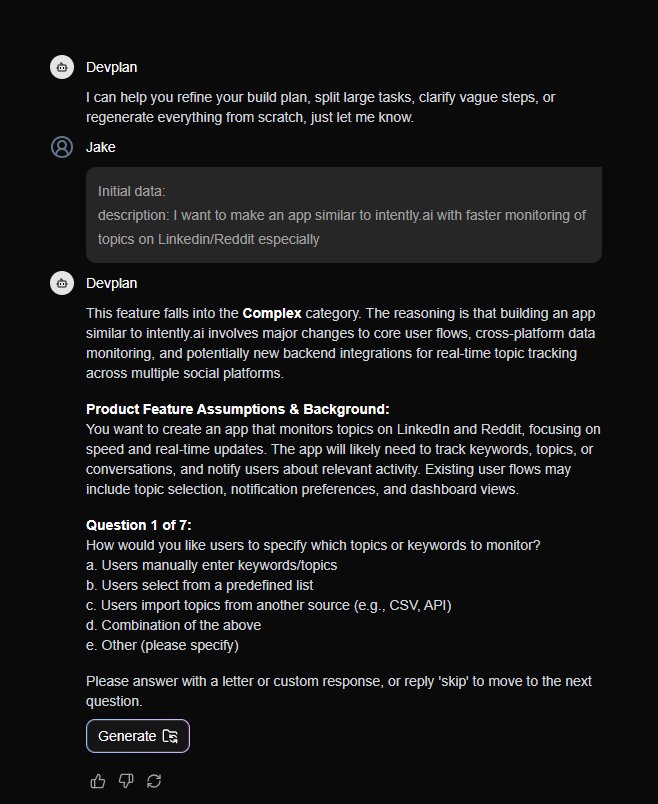

Step 2: Agent-guided discovery

The Planning Agent asks questions specific to your implementation rather than generic planning questions, because it already knows your tech stack and architecture.

Discovery typically surfaces 3 to 5 critical requirements that would otherwise be found only during implementation, when they are significantly more expensive to address.

Step 3: PRD generation

Devplan generates a PRD covering an executive summary, user flows, key requirements, and out-of-scope items. It also includes integration points with your existing systems, code architecture recommendations, and a risk assessment grounded in your actual codebase.

The Review Agent evaluates the PRD before you see it:

| Quality check | What it evaluates |
|---|---|
| Completeness | Are all aspects of the feature covered? |
| Clarity | Can engineers implement this without follow-up questions? |
| Feasibility | Are the requirements achievable given your architecture? |
| Alignment | Does this fit your existing product strategy? |

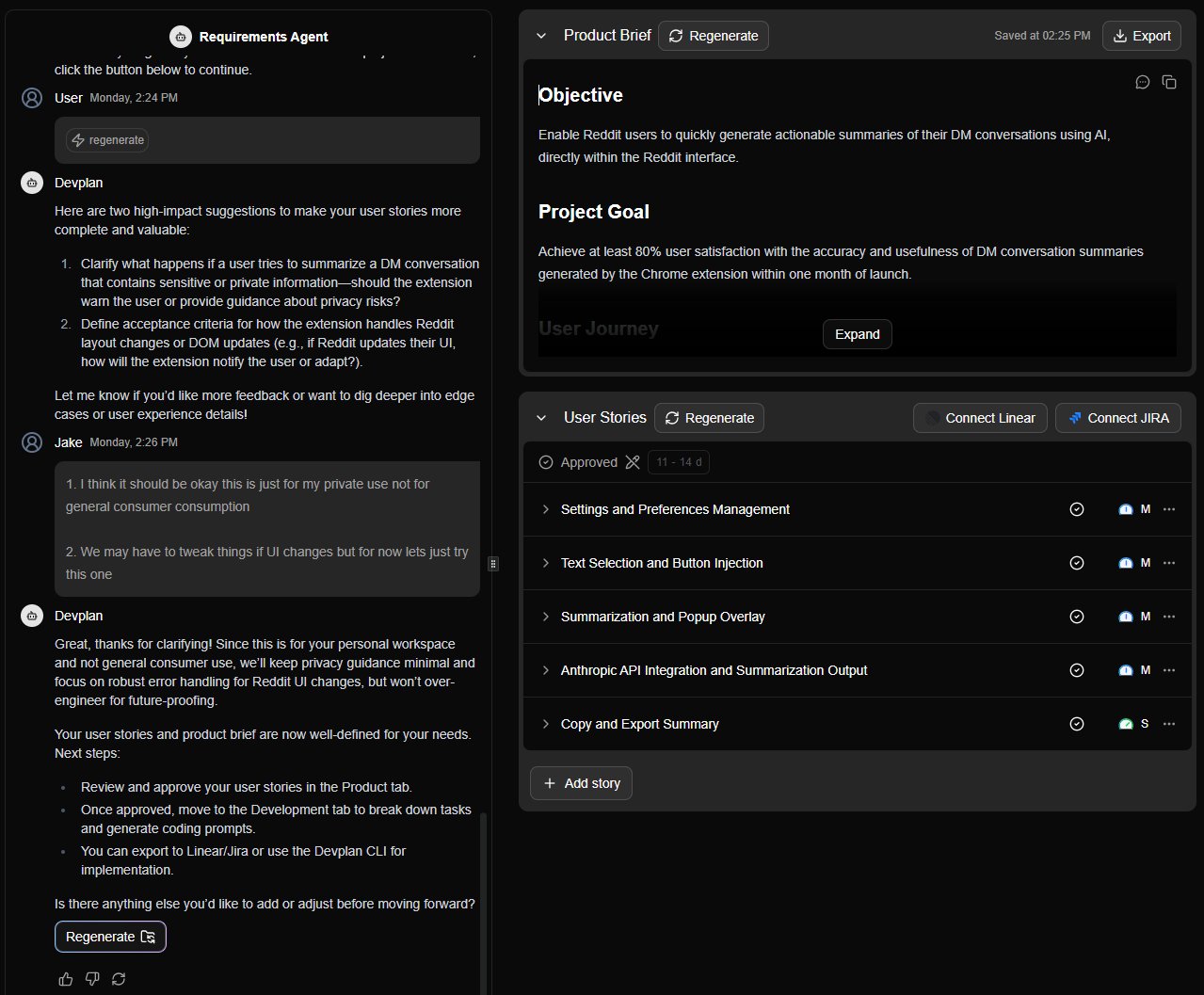

Step 4: User stories

Devplan breaks the PRD into implementation-ready user stories with complexity estimates calibrated to your actual codebase.

Each story receives an Agentic Score — a measure of how likely an AI coding agent is to implement the story correctly without human correction.

| Score | What it means | Recommended action |
|---|---|---|
| High | Well-defined, low ambiguity | Good candidate for direct AI implementation |
| Medium | Some ambiguity present | Review and refine before handing to an agent |
| Low | Complex or underspecified | Decompose or add detail before using AI |

Low Agentic Scores are a useful signal, not a problem. Use them to identify which stories need more detail before you run them through your AI IDE.
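The triage described in the table above can be sketched as a simple lookup. This is an illustration only: the `Story` class, the `triage` function, and the lowercase score labels are hypothetical names, not part of Devplan's API, and the sketch assumes scores are available as the three levels shown.

```python
from dataclasses import dataclass

# Recommended actions taken from the table above; the keys and the
# dictionary itself are assumptions made for this sketch.
RECOMMENDED_ACTION = {
    "high": "Good candidate for direct AI implementation",
    "medium": "Review and refine before handing to an agent",
    "low": "Decompose or add detail before using AI",
}


@dataclass
class Story:
    title: str
    agentic_score: str  # "high", "medium", or "low" (assumed representation)


def triage(stories):
    """Group story titles by the recommended action for their score."""
    groups = {}
    for story in stories:
        action = RECOMMENDED_ACTION[story.agentic_score.lower()]
        groups.setdefault(action, []).append(story.title)
    return groups


stories = [
    Story("Add login form", "High"),
    Story("Migrate session storage", "Low"),
    Story("Password reset email", "Medium"),
]
for action, titles in triage(stories).items():
    print(f"{action}: {', '.join(titles)}")
```

Grouping by action rather than by score keeps the output focused on what to do next with each story.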

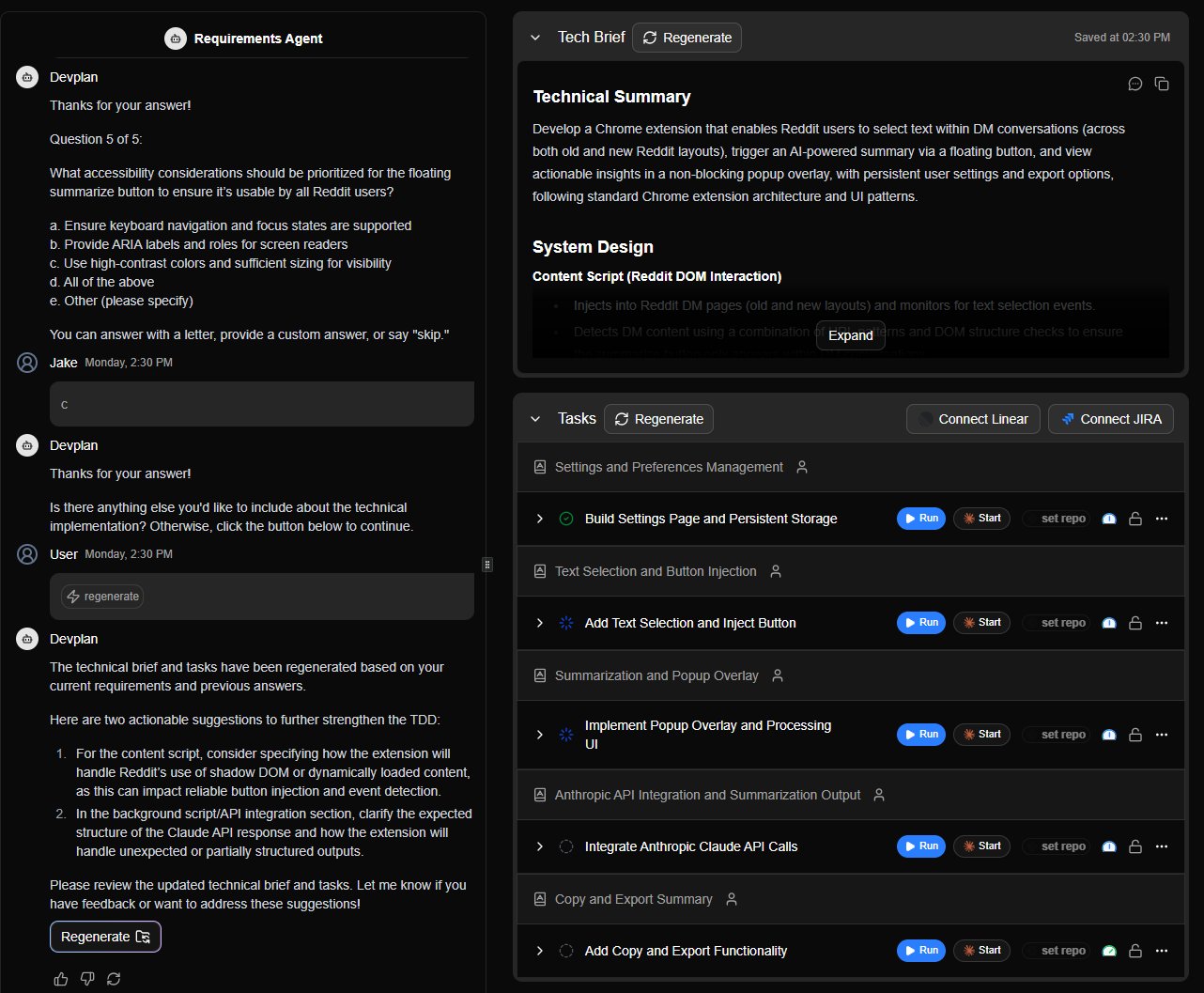

Step 5: Technical design

For each user story, Devplan generates implementation guidance specific to your codebase.

| Area | What is provided |
|---|---|
| Architecture integration | How new code fits into existing modules |
| Database changes | Schema additions or modifications required |
| API surface | Endpoints that need creation or modification |
| Dependencies | Service interactions and external dependencies |
| Technical specs | Component structure, state management, testing strategy |

Step 6: PM tool sync

| Devplan object | Linear | Jira |
|---|---|---|
| Project | Linear Project | Jira Epic |
| User Story | Linear Issue | Jira User Story |
| Technical task | Linear Sub-issue | Jira Task |

Syncs are one-directional: Devplan pushes to your PM tool, and changes made in Linear or Jira after export are not reflected back in Devplan.
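The object mapping above can be written down as a small lookup table. This is a sketch for illustration: the `PM_TOOL_MAPPING` dictionary and the `target_type` function are hypothetical names, not Devplan's API. Only the type names come from the table.

```python
# Object-type mapping copied from the table above. The dictionary layout
# and the function name are illustrative assumptions.
PM_TOOL_MAPPING = {
    "linear": {
        "Project": "Linear Project",
        "User Story": "Linear Issue",
        "Technical task": "Linear Sub-issue",
    },
    "jira": {
        "Project": "Jira Epic",
        "User Story": "Jira User Story",
        "Technical task": "Jira Task",
    },
}


def target_type(devplan_object, tool):
    """Return the PM-tool object type that a Devplan object is pushed to."""
    try:
        return PM_TOOL_MAPPING[tool.lower()][devplan_object]
    except KeyError:
        raise ValueError(f"No mapping for {devplan_object!r} in {tool!r}") from None


print(target_type("User Story", "Linear"))    # Linear Issue
print(target_type("Technical task", "Jira"))  # Jira Task
```

The lookup runs in one direction only, from Devplan object to PM-tool type, which mirrors the push-only sync.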

Step 7: AI coding prompts

Each user story generates a prompt containing exact file paths, codebase-specific patterns, integration points with existing code, and edge case considerations. An example prompt:

## Project Context

- Next.js 14 app with App Router
- PostgreSQL + Prisma ORM
- Tailwind CSS + Shadcn UI
- TypeScript throughout

## Current Task

Implement user authentication (login/register) with email/password and session management.

## Files to Reference

- /src/components/ui/* (existing UI components)
- /src/lib/validations.ts (validation patterns)
- /prisma/schema.prisma (database schema)
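To make the structure of such a prompt concrete, here is a minimal sketch that renders the same three sections from structured inputs. The `build_prompt` function and its parameters are hypothetical and only illustrate the layout. They do not describe how Devplan generates prompts internally.

```python
def build_prompt(project_context, task, reference_files):
    """Render a coding prompt with the three sections shown above.

    reference_files maps a file path to a short note on why it matters.
    """
    lines = ["## Project Context"]
    lines += [f"- {item}" for item in project_context]
    lines += ["", "## Current Task", task, "", "## Files to Reference"]
    lines += [f"- {path} ({note})" for path, note in reference_files.items()]
    return "\n".join(lines)


prompt = build_prompt(
    project_context=["Next.js 14 app with App Router", "PostgreSQL + Prisma ORM"],
    task=(
        "Implement user authentication (login/register) "
        "with email/password and session management."
    ),
    reference_files={"/prisma/schema.prisma": "database schema"},
)
print(prompt)
```

Keeping project context, task, and file references as separate inputs makes it easy to reuse the same context across several stories.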

Step 8: CLI

The CLI bridges the Devplan web app and your local AI IDE. Copy the command from any story's prompt popover and run it in your terminal.

See the CLI Cheat Sheet for the full command reference.

Step 9: Monitoring

| What Devplan tracks | Details |
|---|---|
| Story completion rates | Progress against the planned build |
| Time vs. estimate | Deviation from complexity estimates |
| Scope changes | New items added relative to original plan |
| Blockers | Dependency issues and stalled tasks |

The Run Button executes tasks in the cloud and shows a live view of active runs, assignees, and linked pull requests.
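Two of the tracked metrics, story completion rate and time vs. estimate, come down to simple arithmetic. The sketch below shows one plausible way to compute them. The field names (`done`, `estimate`, `actual`) and the use of a single numeric effort unit are assumptions made for illustration, not Devplan's data model.

```python
def completion_rate(stories):
    """Fraction of planned stories marked done (0.0 when there are none)."""
    if not stories:
        return 0.0
    return sum(1 for s in stories if s["done"]) / len(stories)


def estimate_deviation(stories):
    """Relative deviation of actual from estimated effort, finished stories only."""
    finished = [s for s in stories if s["done"]]
    estimated = sum(s["estimate"] for s in finished)
    if estimated == 0:
        return 0.0
    actual = sum(s["actual"] for s in finished)
    return (actual - estimated) / estimated


# Hypothetical records; effort is in an arbitrary unit such as hours.
stories = [
    {"done": True, "estimate": 4, "actual": 5},
    {"done": True, "estimate": 6, "actual": 6},
    {"done": False, "estimate": 3, "actual": 0},
]
print(f"Completion: {completion_rate(stories):.0%}")
print(f"Deviation: {estimate_deviation(stories):+.0%}")
```

With these sample records, two of three stories are done, and the finished work took 11 units against an estimate of 10, a deviation of +10%.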